Line-Art Colorization V3 (it differs from V2 in how the reference image is processed), and it works better too

Updated: 2026-03-26 15:42:43


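This article explores reference-based line-art colorization. Drawing on an analysis of the relevant paper and on model training with small images on A, it focuses on how blurring affects the color reference image. The experiments compare several blur parameters with and without masking, show how blurring effectively removes texture information, and record the loss curves, the code, and the test results.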

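Version 3: reference-based colorization

Let me be clear up front: there really is a gap between this and my V2 line-art colorization. I am not padding out my project list here.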

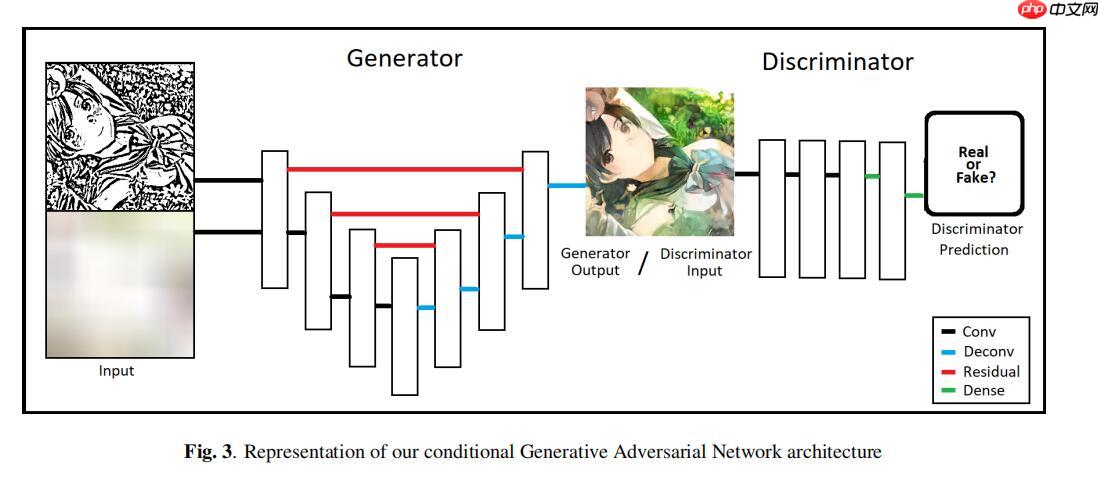

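This project is based on the paper MANGAN: Assisting Colorization of Manga Characters Concept Art using Conditional GAN (I could not find the original code). The focus is on understanding the paper and implementing its core technique, so the experiments can be dissected in depth even without the authors' code. The project includes detailed descriptions and implementation steps, and suits readers who want a closer look at how concept art and digital rendering come together.

Note, and I want to stress this: for the best experience, the images used in this project are all of size […]. The small images in the earlier version were a bit too small, and I still have some regrets about them. Training on A took around […] hours, and A performed really well: it provides […]B of video memory, which is a big boost.

1. What the paper is worth

The paper had it a bit rough. How so? It had no paired dataset (colored anime images matched one-to-one with line art), haha. So the first thing it does is solve the dataset problem.

The paper's description:

No dataset for manga line-art colorization (line art paired with colored art) is available in the literature, and the datasets from related work cannot be used, so we had to build a dedicated dataset for testing. We crawled a large number of colored manga/anime character images from the "safebooru" website. After removing duplicates and uncolored images, we arrived at the final set of images.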

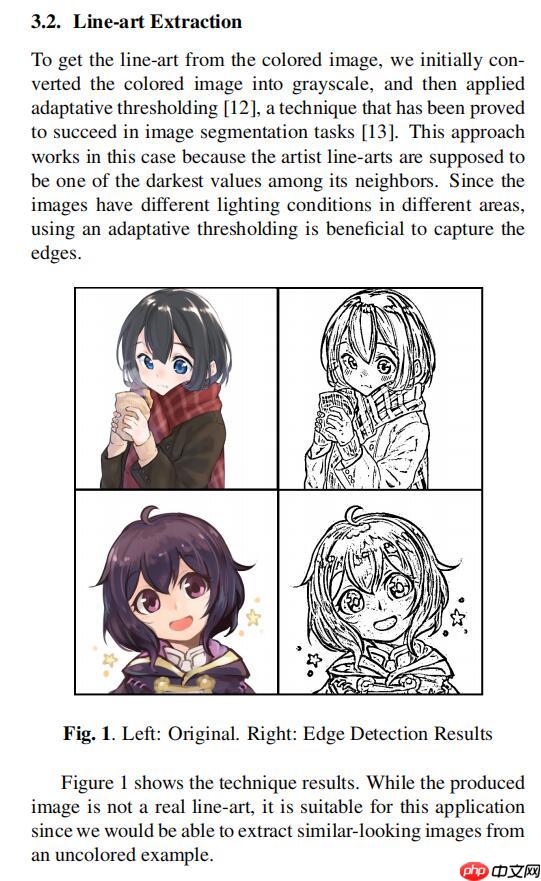

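To obtain clean line art from the colored images, we first convert each colored image to grayscale and then apply adaptive thresholding, a method that has been used successfully in image segmentation tasks. Adaptive thresholding is particularly effective for extracting lines because the artist's strokes are usually the darkest parts of the image. Since lighting conditions vary from region to region, adaptive thresholding captures edge details better than a single global threshold would.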
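As a rough illustration of the adaptive thresholding step described above, the sketch below compares each pixel with the mean of its neighbourhood. It is a plain NumPy stand-in written under assumptions: the paper's exact method and parameters are not given here, and `adaptive_threshold_mean`, `block_size` and `c` are hypothetical names and values. With OpenCV, `cv2.adaptiveThreshold` covers the same idea.

```python
import numpy as np

def adaptive_threshold_mean(gray, block_size=7, c=10):
    """Mark a pixel as line (0) when it is darker than its local mean minus c, else 255."""
    pad = block_size // 2
    padded = np.pad(gray.astype(np.float64), pad, mode="edge")
    # Integral image with a leading zero row/column, so box sums are four lookups.
    integral = np.pad(padded, ((1, 0), (1, 0)), mode="constant").cumsum(0).cumsum(1)
    h, w = gray.shape
    b = block_size
    box_sum = (integral[b:b + h, b:b + w] - integral[:h, b:b + w]
               - integral[b:b + h, :w] + integral[:h, :w])
    local_mean = box_sum / (b * b)
    return np.where(gray < local_mean - c, 0, 255).astype(np.uint8)

# Toy input (assumed): uneven lighting from left to right plus one dark stroke.
gray = np.tile(np.linspace(100, 250, 32), (32, 1))
gray[:, 16] -= 80
gray = gray.astype(np.uint8)
lines = adaptive_threshold_mean(gray)
print(np.unique(lines[:, 16]), np.unique(np.delete(lines, 16, axis=1)))
```

On this toy input only the stroke column ends up black, even though the lighting changes across the image. A single global threshold would have to trade the dark left side against the bright right side.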

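Color information received by the model

We can see that the color hint is nearly useless for describing the colors of an anime image, and it is especially poor at describing the color of small regions precisely. This property actually suits our application, because we want to avoid manually specifying a color for every region, which would be a huge burden.

Model architecture diagram

There is nothing else in the paper worth learning. Meeting adjourned.

I was not sure this approach would work, so I decided to run an experiment. I had already practiced something similar in my SCFT line-art colorization, and handling the color information in the reference image is the tricky part of this project, so it is a good chance to try the approach out.

Note that the point of this project is to test whether blurring the color reference image is effective. I did not read the paper's network architecture or code details closely; instead I swapped in SCFT and applied a few more tricks in the code. I will explain and analyze the code step by step, so please read it carefully!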

    # Unzip the dataset; this only needs to run once
    import os
    if not os.path.isdir("./data/d"):
        os.mkdir("./data/d")
    ! unzip -qo data/data128161/archive.zip -d ./data/d

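2. Building the reference image

Because the character usually sits near the central axis of the image, the range of `randx` in the code below is chosen deliberately, so that the randomly placed white squares cover the character. Blurring is then applied to wash out fine detail, and a slight warp is added, so that the model is less prone to overfitting during training.

2.1 Masking and blurring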

    import cv2
    import matplotlib.pyplot as plt
    import numpy as np
    from random import randint

    file_name = "data/d/data/train/10007.png"
    cimg = cv2.cvtColor(cv2.imread(file_name, 1), cv2.COLOR_BGR2RGB)
    cimg = cimg[:, :512, :]
    for i in range(30):
        randx = randint(50, 400)
        randy = randint(0, 450)
        cimg[randx:randx+50, randy:randy+50] = 255  # set the pixels to 255 (white)
    blur = cv2.blur(cimg, (100, 100))
    plt.figure(figsize=(40, 20))
    plt.axis("off")
    plt.subplot(131)
    plt.imshow(cimg)
    plt.title("img1")
    plt.subplot(132)
    plt.imshow(blur)
    plt.title("img2")
    cimg.shape

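(512, 512, 3)

2.2 Adding some warping

Why is blurring not enough? Because I want some warping too! The reason is that I am a rigorous man. Blurring is already in place, but to be safe I also add a warp during training, so that the model is less likely to be misled by the reference and performs better.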

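    # Reconstructed from a damaged listing: the numeric literals were lost in the source,
    # so MAX_SHIFT, the triangle corners and the border color below are assumed values.
    MAX_SHIFT = 20

    def AffineTrans(img):
        randx1 = randint(-MAX_SHIFT, MAX_SHIFT)
        randx2 = randint(-MAX_SHIFT, MAX_SHIFT)
        randy1 = randint(-MAX_SHIFT, MAX_SHIFT)
        randx3 = randint(-MAX_SHIFT, MAX_SHIFT)
        randy2 = randint(-MAX_SHIFT, MAX_SHIFT)
        rows, cols = img.shape[:-1]
        # triangle vertices in the source image
        pts1 = np.float32([[randx1 + 50, 50], [randx2 + 200, 50], [50, randy1 + 200]])
        # triangle vertices in the target image
        pts2 = np.float32([[50, 50], [200, randy2 + 50], [randx3 + 50, 200]])
        M = cv2.getAffineTransform(pts1, pts2)  # compute the affine transform matrix
        dst = cv2.warpAffine(img, M, (cols, rows), borderValue=(255, 255, 255))  # apply the affine transform
        return dst

    # The file name and the masking values are also missing here; they are assumed
    # to match the masking cell in section 2.1.
    file_name = "data/d/data/train/10007.png"
    cimg = cv2.cvtColor(cv2.imread(file_name, 1), cv2.COLOR_BGR2RGB)
    cimg = cimg[:, :512, :]
    for i in range(30):
        randx = randint(50, 400)
        randy = randint(0, 450)
        cimg[randx:randx+50, randy:randy+50] = 255
    affine_img = AffineTrans(cimg)
    plt.figure(figsize=(40, 20))
    plt.axis("off")
    plt.subplot(131)
    plt.imshow(cimg)
    plt.title("img1")
    plt.subplot(132)
    plt.imshow(affine_img)
    plt.title("img2")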

(512, 512, 3)

<Figure size 2880x1440 with 2 Axes>

2.3 The final reference image

    import cv2
    import matplotlib.pyplot as plt
    import numpy as np
    from random import randint

    file_name = "data/d/data/train/10007.png"
    cimg = cv2.cvtColor(cv2.imread(file_name, 1), cv2.COLOR_BGR2RGB)
    cimg = cimg[:, :512, :]
    for i in range(30):
        randx = randint(50, 400)
        randy = randint(0, 450)
        cimg[randx:randx+50, randy:randy+50] = 255  # set the pixels to 255 (white)
    affine_img = AffineTrans(cimg)
    blur = cv2.blur(affine_img, (100, 100))
    plt.figure(figsize=(40, 20))
    plt.axis("off")
    plt.subplot(131)
    plt.imshow(cimg)
    plt.title("img1")
    plt.subplot(132)
    plt.imshow(blur)
    plt.title("img2")
    cimg.shape

(512, 512, 3)

<Figure size 2880x1440 with 2 Axes>

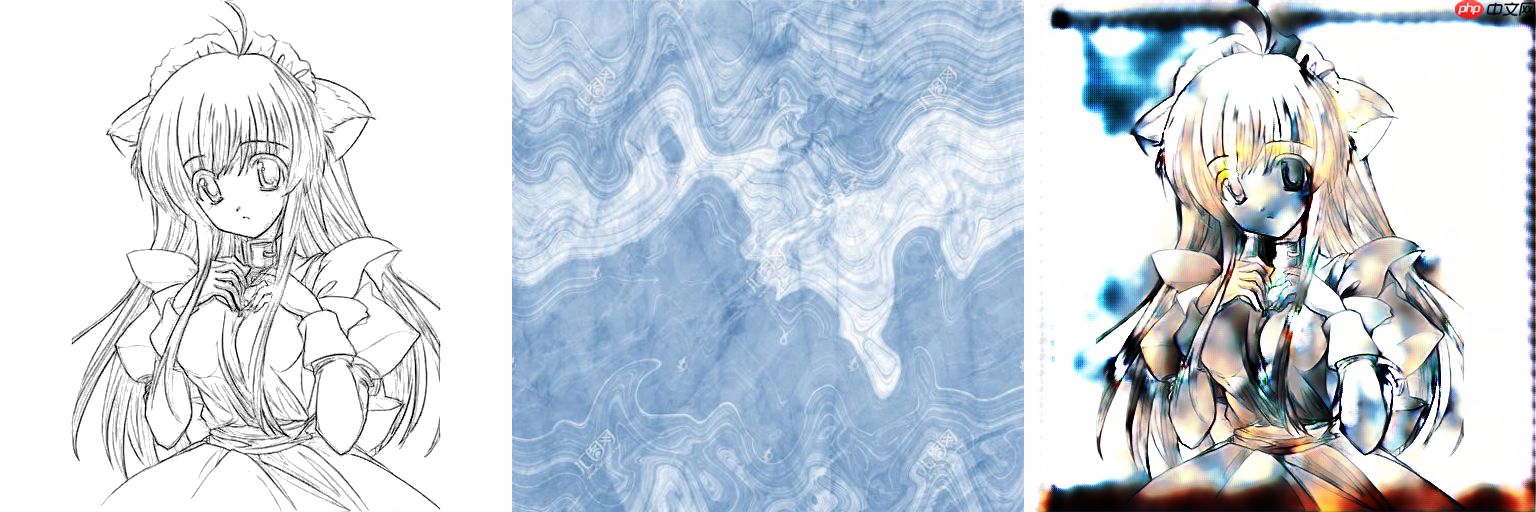

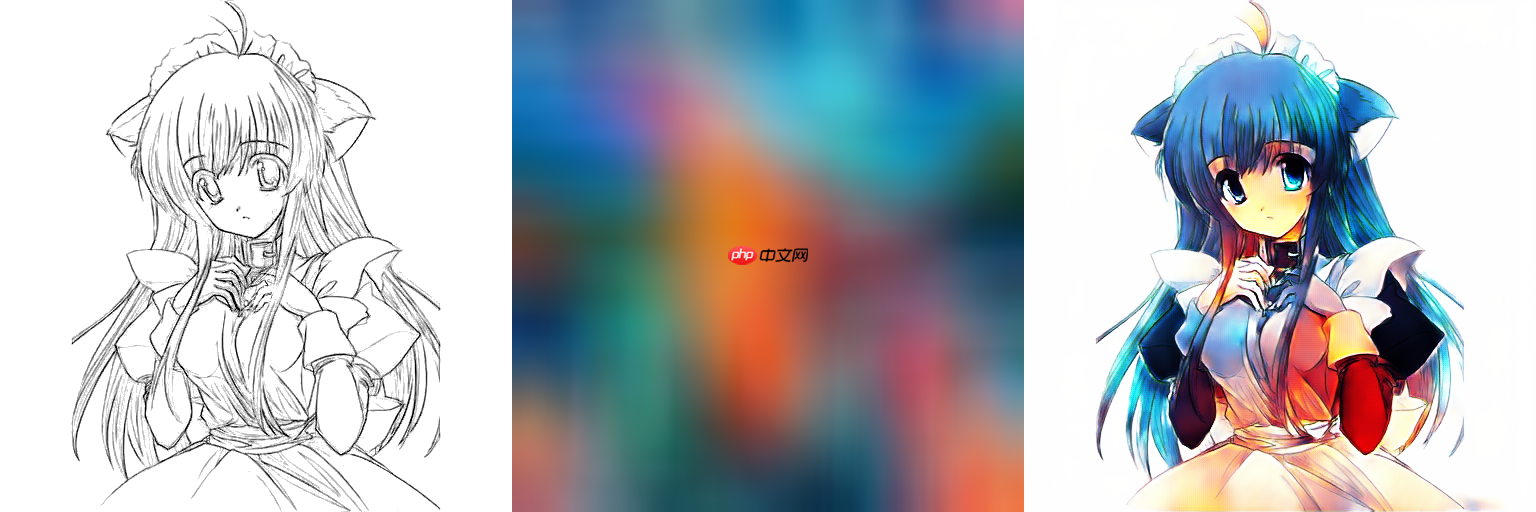

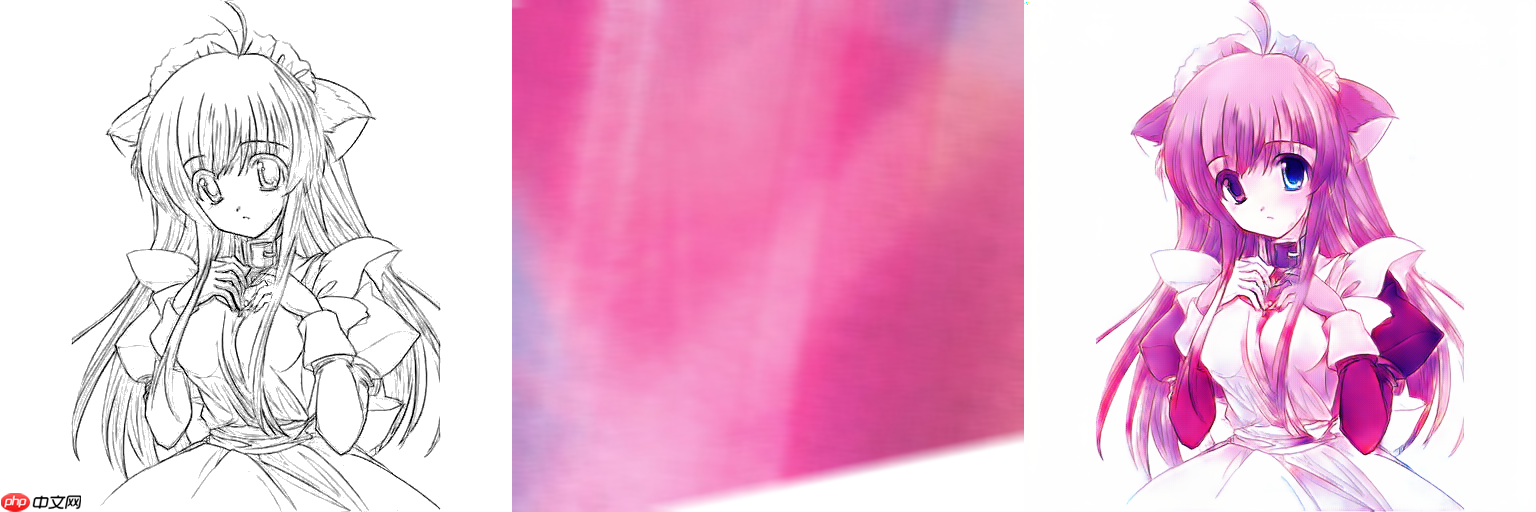

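3. Test results after training

This works as an ablation comparison, and it demonstrates in practice how powerful blurring is. Blurring directly strips the texture information from the color reference image. It is a parameter-free method, yet it works remarkably well: the network no longer has to extract the color information from an unprocessed reference and learn to ignore its texture, because the reference I feed it carries no structural information to begin with. How much burden does that take off the network? This is what a simple and effective method looks like.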
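To make the claim about texture concrete, here is a small NumPy sketch: a mean filter applied to a finely striped patch leaves the average color almost untouched while most of the pixel-to-pixel variation disappears. `box_blur` is a hypothetical stand-in for `cv2.blur` (it replicates edges, whereas OpenCV uses a different default border mode), and the patch and window size are assumed toy values.

```python
import numpy as np

def box_blur(channel, k):
    """Mean filter with a k x k window (k odd) and edge replication."""
    pad = k // 2
    padded = np.pad(channel.astype(np.float64), pad, mode="edge")
    kernel = np.ones(k) / k
    # The box filter is separable: average along rows first, then along columns.
    rows = np.apply_along_axis(lambda r: np.convolve(r, kernel, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda col: np.convolve(col, kernel, mode="valid"), 0, rows)

# A 64x64 patch of fine stripes: strong texture around an average value of 150.
x = np.arange(64)
stripes = np.where((x // 2) % 2 == 0, 100.0, 200.0)
textured = np.tile(stripes, (64, 1))
blurred = box_blur(textured, 17)

print("std before / after:", textured.std(), blurred.std())
print("interior range after blur:", blurred[:, 8:-8].min(), blurred[:, 8:-8].max())
```

Away from the borders the blurred patch stays within a few gray levels of 150, so the color survives and the stripes do not. That is the property the reference image relies on.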

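From left to right: the line art, the color reference image, and the ground truth. Note that my reference images are blurred with cv2.blur(img, (smooth_size, smooth_size)), with a different smooth_size for each row; the color reference images in these three rows are not masked with small squares.

The row below uses cv2.blur(img, (smooth_size, smooth_size)) with smooth_size = 100, and 30 random 50*50 square regions are set to white.

Next is a result on a preprocessed line drawing. Some areas look as if they have overlapping layers, but overall it holds up.

The two below are normal results, one with white square blocks filled in and one without; smooth_size is 100 for both.

Next is the result of feeding an ordinary texture image directly as the color reference, again blurred with smooth_size. This processing removes the texture and line information from the color reference very well and simplifies the image, and it is both simple and effective.

Finally, here are a few more results, just for show.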

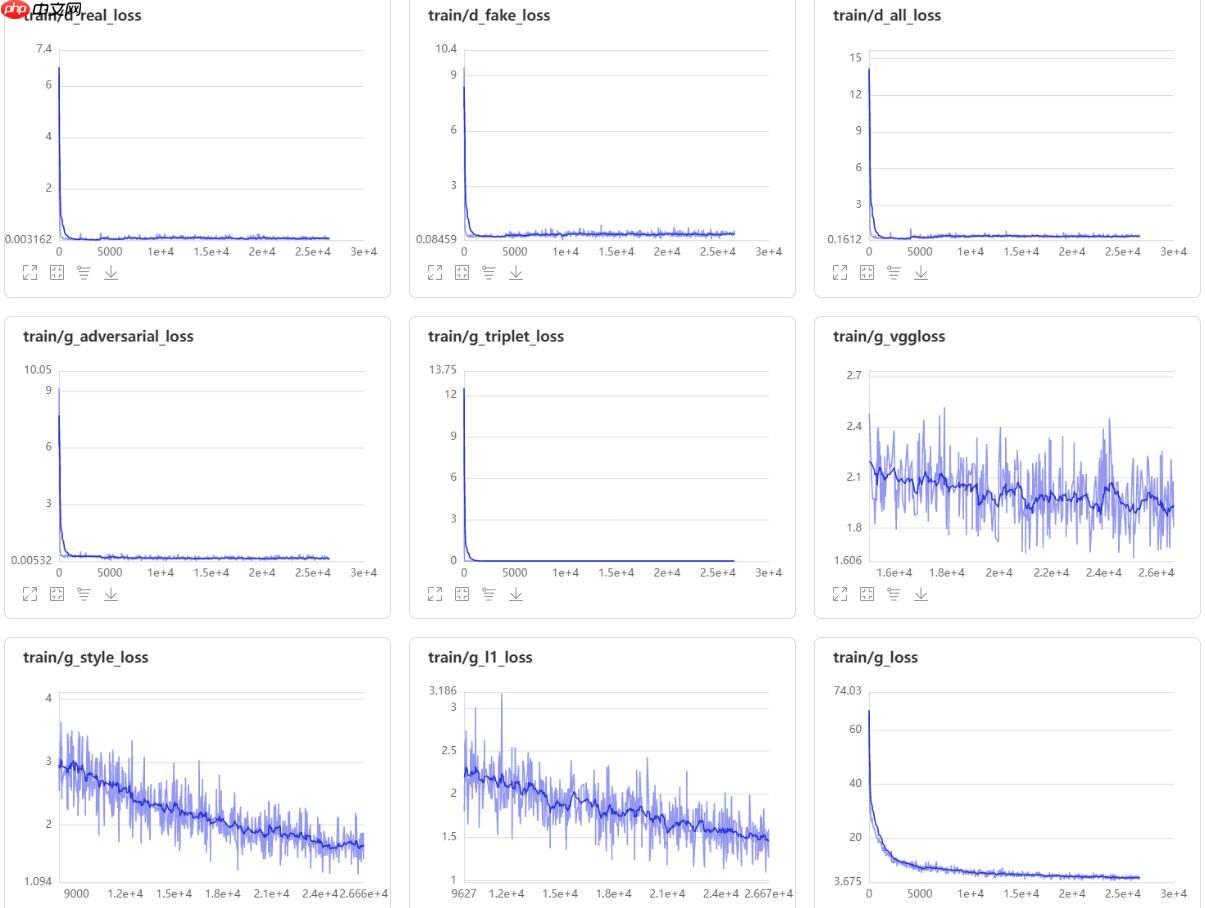

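The following images are from training.

4. Loss curves

5. Code

Once the cells in this section have been run, the final part is the test code, there to make it easier for everyone to try things out.

    import cv2
    from PIL import Image
    from paddle.vision.transforms import CenterCrop, Resize
    from paddle.vision.transforms import RandomRotation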

    '''
    In V2 the normalization layer of my ResBlock was BN; I forgot to change it to IN back then.
    '''
    import paddle
    import paddle.nn as nn

    class ResBlock(nn.Layer):
        def __init__(self, in_channels, out_channels, stride=1):
            super(ResBlock, self).__init__()

            def block(in_channels, out_channels, kernel_size=3, stride=1, padding=1, bias=False):
                layers = []
                layers += [nn.Conv2D(in_channels=in_channels, out_channels=out_channels,
                                     kernel_size=kernel_size, stride=stride, padding=padding,
                                     bias_attr=bias)]
                layers += [nn.InstanceNorm2D(num_features=out_channels)]
                layers += [nn.ReLU()]
                layers += [nn.Conv2D(in_channels=out_channels, out_channels=out_channels,
                                     kernel_size=kernel_size, stride=stride, padding=padding,
                                     bias_attr=bias)]
                layers += [nn.InstanceNorm2D(num_features=out_channels)]
                cbr = nn.Sequential(*layers)
                return cbr

            self.block_1 = block(in_channels, out_channels)
            self.block_2 = block(out_channels, out_channels)
            self.block_3 = block(out_channels, out_channels)
            self.block_4 = block(out_channels, out_channels)
            self.relu = nn.ReLU()

        def forward(self, x):
            # block 1
            residual = x
            out = self.block_1(x)
            out = self.relu(out)
            # block 2
            residual = out
            out = self.block_2(out)
            out += residual
            out = self.relu(out)
            # block 3
            residual = out
            out = self.block_3(out)
            out += residual
            out = self.relu(out)
            # block 4
            residual = out
            out = self.block_4(out)
            out += residual
            out = self.relu(out)
            return out

    x = paddle.randn([4, 3, 256, 256])
    ResBlock(3, 7)(x).shape

W0423 10:58:03.596845 10634 device_context.cc:447] Please NOTE: device: 0, GPU Compute Capability: 7.0, Driver API Version: 10.1, Runtime API Version: 10.1
W0423 10:58:03.602501 10634 device_context.cc:465] device: 0, cuDNN Version: 7.6.

[4, 7, 256, 256]

    import paddle
    import paddle.nn as nn

    class Encoder(nn.Layer):
        def __init__(self, in_channels=3):
            super(Encoder, self).__init__()

            def CL2d(in_channels, out_channels, kernel_size=3, stride=1, padding=1, bias=True, LR_negative_slope=0.2):
                layers = []
                layers += [nn.Conv2D(in_channels=in_channels, out_channels=out_channels,
                                     kernel_size=kernel_size, stride=stride, padding=padding,
                                     bias_attr=bias)]
                layers += [nn.LeakyReLU(LR_negative_slope)]
                cbr = nn.Sequential(*layers)
                return cbr

            # conv_layer
            self.conv1 = CL2d(in_channels, 16)
            self.conv2 = CL2d(16, 16)
            self.conv3 = CL2d(16, 32, stride=2)
            self.conv4 = CL2d(32, 32)
            self.conv5 = CL2d(32, 64, stride=2)
            self.conv6 = CL2d(64, 64)
            self.conv7 = CL2d(64, 128, stride=2)
            self.conv8 = CL2d(128, 128)
            self.conv9 = CL2d(128, 256, stride=2)
            self.conv10 = CL2d(256, 256)
            # downsample_layer
            self.downsample1 = nn.AvgPool2D(kernel_size=16, stride=16)
            self.downsample2 = nn.AvgPool2D(kernel_size=8, stride=8)
            self.downsample3 = nn.AvgPool2D(kernel_size=4, stride=4)
            self.downsample4 = nn.AvgPool2D(kernel_size=2, stride=2)

        def forward(self, x):
            f1 = self.conv1(x)
            f2 = self.conv2(f1)
            f3 = self.conv3(f2)
            f4 = self.conv4(f3)
            f5 = self.conv5(f4)
            f6 = self.conv6(f5)
            f7 = self.conv7(f6)
            f8 = self.conv8(f7)
            f9 = self.conv9(f8)
            f10 = self.conv10(f9)
            F = [f9, f8, f7, f6, f5, f4, f3, f2, f1]
            v1 = self.downsample1(f1)
            v2 = self.downsample1(f2)
            v3 = self.downsample2(f3)
            v4 = self.downsample2(f4)
            v5 = self.downsample3(f5)
            v6 = self.downsample3(f6)
            v7 = self.downsample4(f7)
            v8 = self.downsample4(f8)
            V = paddle.concat((v1, v2, v3, v4, v5, v6, v7, v8, f9, f10), axis=1)
            h, w = V.shape[2], V.shape[3]
            V = paddle.reshape(V, (V.shape[0], V.shape[1], h*w))
            V = paddle.transpose(V, [0, 2, 1])
            return V, F, (h, w)

    x = paddle.randn([4, 3, 256, 256])
    a, b, _ = Encoder()(x)
    print(a.shape)

[4, 256, 992]
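The shape `[4, 256, 992]` printed above follows from simple bookkeeping, which the pure-Python sketch below reproduces: the ten feature maps are pooled to a common size and concatenated along the channel axis. The layer table is copied from the `Encoder` definition; `encoder_output_shape` is a hypothetical helper for illustration, not part of the project.

```python
# (out_channels, stride) of conv1..conv10, copied from the Encoder above
CONV_LAYERS = [(16, 1), (16, 1), (32, 2), (32, 1), (64, 2),
               (64, 1), (128, 2), (128, 1), (256, 2), (256, 1)]

def encoder_output_shape(batch, image_size):
    """Return the shape of V and the pooling factor each feature map needs."""
    size = image_size
    sizes = []
    for _, stride in CONV_LAYERS:
        size //= stride  # a 3x3 conv with padding 1 keeps the size; stride 2 halves it
        sizes.append(size)
    final = sizes[-1]
    # f1..f8 are average-pooled down to the spatial size of f9 and f10
    pool_factors = [s // final for s in sizes]
    channels = sum(c for c, _ in CONV_LAYERS)
    return [batch, final * final, channels], pool_factors

shape, pools = encoder_output_shape(4, 256)
print(shape)   # [4, 256, 992]
print(pools)   # [16, 16, 8, 8, 4, 4, 2, 2, 1, 1]
```

The pooling factors match `downsample1` to `downsample4`, and the channel total of 992 is why `dv=992` and `ResBlock(992, 992)` appear further down. With the 512x512 images used for training the same arithmetic gives 32*32 = 1024 tokens.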

    class UNetDecoder(nn.Layer):
        def __init__(self):
            super(UNetDecoder, self).__init__()

            def CBR2d(in_channels, out_channels, kernel_size=3, stride=1, padding=1, bias=True):
                layers = []
                layers += [nn.Conv2D(in_channels=in_channels, out_channels=out_channels,
                                     kernel_size=kernel_size, stride=stride, padding=padding,
                                     bias_attr=bias)]
                # layers += [nn.BatchNorm2D(num_features=out_channels)]
                layers += [nn.InstanceNorm2D(num_features=out_channels)]
                layers += [nn.ReLU()]
                cbr = nn.Sequential(*layers)
                return cbr

            self.dec5_1 = CBR2d(in_channels=992+992, out_channels=256)
            self.unpool4 = nn.Conv2DTranspose(in_channels=512, out_channels=512,
                                              kernel_size=2, stride=2, padding=0, bias_attr=True)
            self.dec4_2 = CBR2d(in_channels=512+128, out_channels=128)
            self.dec4_1 = CBR2d(in_channels=128+128, out_channels=128)
            self.unpool3 = nn.Conv2DTranspose(in_channels=128, out_channels=128,
                                              kernel_size=2, stride=2, padding=0, bias_attr=True)
            self.dec3_2 = CBR2d(in_channels=128+64, out_channels=64)
            self.dec3_1 = CBR2d(in_channels=64+64, out_channels=64)
            self.unpool2 = nn.Conv2DTranspose(in_channels=64, out_channels=64,
                                              kernel_size=2, stride=2, padding=0, bias_attr=True)
            self.dec2_2 = CBR2d(in_channels=64+32, out_channels=32)
            self.dec2_1 = CBR2d(in_channels=32+32, out_channels=32)
            self.unpool1 = nn.Conv2DTranspose(in_channels=32, out_channels=32,
                                              kernel_size=2, stride=2, padding=0, bias_attr=True)
            self.dec1_2 = CBR2d(in_channels=32+16, out_channels=16)
            self.dec1_1 = CBR2d(in_channels=16+16, out_channels=16)
            self.fc = nn.Conv2D(in_channels=16, out_channels=3, kernel_size=1, stride=1, padding=0, bias_attr=True)

        def forward(self, x, F):
            dec5_1 = self.dec5_1(x)
            unpool4 = self.unpool4(paddle.concat((dec5_1, F[0]), axis=1))
            dec4_2 = self.dec4_2(paddle.concat((unpool4, F[1]), axis=1))
            dec4_1 = self.dec4_1(paddle.concat((dec4_2, F[2]), axis=1))
            unpool3 = self.unpool3(dec4_1)
            dec3_2 = self.dec3_2(paddle.concat((unpool3, F[3]), axis=1))
            dec3_1 = self.dec3_1(paddle.concat((dec3_2, F[4]), axis=1))
            unpool2 = self.unpool2(dec3_1)
            dec2_2 = self.dec2_2(paddle.concat((unpool2, F[5]), axis=1))
            dec2_1 = self.dec2_1(paddle.concat((dec2_2, F[6]), axis=1))
            unpool1 = self.unpool1(dec2_1)
            dec1_2 = self.dec1_2(paddle.concat((unpool1, F[7]), axis=1))
            dec1_1 = self.dec1_1(paddle.concat((dec1_2, F[8]), axis=1))
            x = self.fc(dec1_1)
            x = nn.Tanh()(x)
            return x

    import math
    import paddle.nn.functional as F

    class SCFT(nn.Layer):
        def __init__(self, sketch_channels, reference_channels, dv=992):
            super(SCFT, self).__init__()
            self.dv = paddle.to_tensor(dv).astype("float32")
            self.w_q = nn.Linear(dv, dv)
            self.w_k = nn.Linear(dv, dv)
            self.w_v = nn.Linear(dv, dv)

        def forward(self, Vs, Vr, shape):
            h, w = shape
            quary = self.w_q(Vs)
            key = self.w_k(Vr)
            value = self.w_v(Vr)
            c = paddle.add(self.scaled_dot_product(quary, key, value), Vs)
            c = paddle.transpose(c, [0, 2, 1])
            c = paddle.reshape(c, (c.shape[0], c.shape[1], h, w))
            return c, quary, key, value

        def masked_fill(self, x, mask, value):
            y = paddle.full(x.shape, value, x.dtype)
            return paddle.where(mask, y, x)

        # https://www.quantumdl.com/entry/11%EC%A3%BC%EC%B0%A82-Attention-is-All-You-Need-Transformer
        def scaled_dot_product(self, query, key, value, mask=None, dropout=None):
            "Compute 'Scaled Dot Product Attention'"
            d_k = query.shape[-1]
            # print(key.shape)
            scores = paddle.matmul(query, key.transpose([0, 2, 1])) \
                / math.sqrt(d_k)
            if mask is not None:
                scores = self.masked_fill(scores, mask == 0, -1e9)
            p_attn = F.softmax(scores, axis=-1)
            if dropout is not None:
                p_attn = nn.Dropout(0.2)(p_attn)
            return paddle.matmul(p_attn, value)
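For readers who want to see the attention step of the `SCFT` layer in isolation, here is a NumPy sketch of scaled dot-product attention followed by the residual addition of the sketch features. It is a simplification under stated assumptions: the learned projections `w_q`, `w_k`, `w_v` are left out (treated as identity), and the token count and feature width are small toy values rather than the 992-wide features used above.

```python
import numpy as np

def softmax(x, axis=-1):
    shifted = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(shifted)
    return e / e.sum(axis=axis, keepdims=True)

def scft_attention(query, key, value, vs):
    """Attend from sketch tokens to reference tokens, then add the sketch features back."""
    d_k = query.shape[-1]
    scores = query @ np.swapaxes(key, -1, -2) / np.sqrt(d_k)  # (batch, n_sketch, n_ref)
    weights = softmax(scores, axis=-1)                         # each row sums to 1
    return weights @ value + vs, weights

rng = np.random.default_rng(0)
vs = rng.normal(size=(2, 16, 8))   # sketch tokens: (batch, tokens, features)
vr = rng.normal(size=(2, 16, 8))   # reference tokens
context, weights = scft_attention(vs, vr, vr, vs)
print(context.shape, weights.shape)
```

Each sketch position receives a weighted mix of reference features, so it can pull color from whichever reference position matches it best, regardless of where that position sits in the image.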

    import paddle
    import paddle.nn as nn

    class Generator(nn.Layer):
        def __init__(self, sketch_channels=1, reference_channels=3, LR_negative_slope=0.2):
            super(Generator, self).__init__()
            self.encoder_sketch = Encoder(sketch_channels)
            self.encoder_reference = Encoder(reference_channels)
            self.scft = SCFT(sketch_channels, reference_channels)
            self.resblock = ResBlock(992, 992)
            self.unet_decoder = UNetDecoder()

        def forward(self, sketch_img, reference_img):
            # encoder
            Vs, F, shape = self.encoder_sketch(sketch_img)
            Vr, _, _ = self.encoder_reference(reference_img)
            # scft
            c, quary, key, value = self.scft(Vs, Vr, shape)
            # resblock
            c_out = self.resblock(c)
            # unet decoder
            I_gt = self.unet_decoder(paddle.concat((c, c_out), axis=1), F)
            return I_gt, quary, key, value

    '''
    Note: I use spectral normalization here (on the discriminator) to make GAN training more stable.
    For an introduction to spectral normalization, see the project at
    https://aistudio.baidu.com/aistudio/projectdetail/3438954
    '''
    import paddle
    import paddle.nn as nn
    from Normal import build_norm_layer
    SpectralNorm = build_norm_layer('spectral')

    # https://github.com/meliketoy/LSGAN.pytorch/blob/master/networks/Discriminator.py
    # LSGAN Discriminator
    class Discriminator(nn.Layer):
        def __init__(self, ndf, nChannels):
            super(Discriminator, self).__init__()
            # input : (batch * nChannels * image width * image height)
            # Discriminator will be consisted with a series of convolution networks
            self.layer1 = nn.Sequential(
                # Input size : input image with dimension (nChannels)*64*64
                # Output size: output feature vector with (ndf)*32*32
                SpectralNorm(nn.Conv2D(
                    in_channels=nChannels, out_channels=ndf,
                    kernel_size=4, stride=2, padding=1, bias_attr=False
                )),
                nn.BatchNorm2D(ndf),
                nn.LeakyReLU(0.2)
            )
            self.layer2 = nn.Sequential(
                # Input size : input feature vector with (ndf)*32*32
                # Output size: output feature vector with (ndf*2)*16*16
                SpectralNorm(nn.Conv2D(
                    in_channels=ndf, out_channels=ndf*2,
                    kernel_size=4, stride=2, padding=1, bias_attr=False
                )),
                nn.BatchNorm2D(ndf*2),
                nn.LeakyReLU(0.2)
            )
            self.layer3 = nn.Sequential(
                # Input size : input feature vector with (ndf*2)*16*16
                # Output size: output feature vector with (ndf*4)*8*8
                SpectralNorm(nn.Conv2D(
                    in_channels=ndf*2, out_channels=ndf*4,
                    kernel_size=4, stride=2, padding=1, bias_attr=False
                )),
                nn.BatchNorm2D(ndf*4),
                nn.LeakyReLU(0.2)
            )
            self.layer4 = nn.Sequential(
                # Input size : input feature vector with (ndf*4)*8*8
                # Output size: output feature vector with (ndf*8)*4*4
                SpectralNorm(nn.Conv2D(
                    in_channels=ndf*4, out_channels=ndf*8,
                    kernel_size=4, stride=2, padding=1, bias_attr=False
                )),
                nn.BatchNorm2D(ndf*8),
                nn.LeakyReLU(0.2)
            )
            self.layer5 = nn.Sequential(
                # Input size : input feature vector with (ndf*8)*4*4
                # Output size: output probability of fake/real image
                SpectralNorm(nn.Conv2D(
                    in_channels=ndf*8, out_channels=1,
                    kernel_size=4, stride=1, padding=0, bias_attr=False
                )),
                # nn.Sigmoid() -- Replaced with Least Square Loss
            )

        def forward(self, x):
            out = self.layer1(x)
            out = self.layer2(out)
            out = self.layer3(out)
            out = self.layer4(out)
            out = self.layer5(out)
            return out

    x = paddle.randn([4, 3, 256, 256])
    Discriminator(64, 3)(x).shape

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/nn/layer/norm.py:653: UserWarning: When training, we now always track global mean and variance.
  "When training, we now always track global mean and variance.")

[4, 1, 13, 13]
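The 13x13 output above can be checked by hand with the standard convolution size formula. The sketch below applies it to the five layers of the `Discriminator`; `conv_out` and `discriminator_out` are hypothetical helper names used only for this illustration.

```python
def conv_out(size, kernel, stride, padding):
    """Spatial output size of a convolution along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1

def discriminator_out(size):
    # layer1..layer4: kernel 4, stride 2, padding 1 (each halves the size)
    for _ in range(4):
        size = conv_out(size, kernel=4, stride=2, padding=1)
    # layer5: kernel 4, stride 1, padding 0
    return conv_out(size, kernel=4, stride=1, padding=0)

print(discriminator_out(256))  # 13
print(discriminator_out(512))  # 29
```

So the discriminator does not return a single score per image. It returns a grid of scores, which is why the training code further down builds its targets with `paddle.ones_like` and `paddle.zeros_like` on the discriminator output. The size comments inside the class (64*64 down to 4*4) describe the 64x64 input of the LSGAN code it was adapted from.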

    from VGG_Model import VGG19
    import paddle

    VGG = VGG19()
    x = paddle.randn([4, 3, 256, 256])
    b = VGG(x)
    for i in b:
        print(i.shape)

[4, 64, 256, 256]
[4, 128, 128, 128]
[4, 256, 64, 64]
[4, 512, 32, 32]
[4, 512, 16, 16]

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/tensor/creation.py:130: DeprecationWarning: `np.object` is a deprecated alias for the builtin `object`. To silence this warning, use `object` by itself. Doing this will not modify any behavior and is safe. Deprecated in NumPy 1.20; for more details and guidance: https://numpy.org/devdocs/release/1.20.0-notes.html#deprecations
  if data.dtype == np.object:

    from visualdl import LogWriter
    log_writer = LogWriter("./log/gnet")

    from paddle.vision.transforms import CenterCrop, Resize
    transform = Resize((512, 512))

    # build the dataset
    IMG_EXTENSIONS = [
        '.jpg', '.JPG', '.jpeg', '.JPEG',
        '.png', '.PNG', '.ppm', '.PPM', '.bmp', '.BMP',
    ]

    import paddle
    import cv2
    import os

    def data_maker(dir):
        images = []
        assert os.path.isdir(dir), '%s is not a valid directory' % dir
        for root, _, fnames in sorted(os.walk(dir)):
            for fname in fnames:
                if is_image_file(fname) and ("outfit" not in fname):
                    path = os.path.join(root, fname)
                    images.append(path)
        return sorted(images)

    def is_image_file(filename):
        return any(filename.endswith(extension) for extension in IMG_EXTENSIONS)

    class AnimeDataset(paddle.io.Dataset):
        """
        """
        def __init__(self):
            super(AnimeDataset, self).__init__()
            self.anime_image_dirs = data_maker("data/d/data/train")
            self.size = len(self.anime_image_dirs)

        # cv2.imread returns BGR, so convert the channels to RGB
        @staticmethod
        def loader(path):
            return cv2.cvtColor(cv2.imread(path, flags=cv2.IMREAD_COLOR),
                                cv2.COLOR_BGR2RGB)

        def __getitem__(self, index):
            img = AnimeDataset.loader(self.anime_image_dirs[index])
            img_a = img[:, :512, :]
            img_a = transform(img_a)
            img_b = img[:, 512:, :]
            img_b = transform(img_b)
            appearance_img = img_a
            sketch_img = img_b
            affine_img = AffineTrans(img_a)
            reference_img = cv2.blur(affine_img, (100, 100))
            return appearance_img, sketch_img, reference_img

        def __len__(self):
            return self.size

    for a, b, c in AnimeDataset():
        print(a.shape, b.shape, c.shape)
        break

(512, 512, 3) (512, 512, 3) (512, 512, 3)

    batch_size = 16
    datas = AnimeDataset()
    data_loader = paddle.io.DataLoader(datas, batch_size=batch_size, shuffle=True, drop_last=True, num_workers=16)
    for input_img, sketch_img, reference_img in data_loader:
        print(input_img.shape, reference_img.shape)
        break

[16, 512, 512, 3] [16, 512, 512, 3]

    generator = Generator()
    discriminator = Discriminator(16, 7)

    scheduler_G = paddle.optimizer.lr.StepDecay(learning_rate=1e-4, step_size=3, gamma=0.9, verbose=True)
    scheduler_D = paddle.optimizer.lr.StepDecay(learning_rate=2e-4, step_size=3, gamma=0.9, verbose=True)
    optimizer_G = paddle.optimizer.Adam(learning_rate=scheduler_G, parameters=generator.parameters(), beta1=0.5, beta2=0.999)
    optimizer_D = paddle.optimizer.Adam(learning_rate=scheduler_D, parameters=discriminator.parameters(), beta1=0.5, beta2=0.999)

Epoch 0: StepDecay set learning rate to 0.0001.
Epoch 0: StepDecay set learning rate to 0.0002.
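To get a feel for what these schedulers do over a run, the sketch below evaluates the step-decay rule in plain Python. It assumes the rule documented for `paddle.optimizer.lr.StepDecay`, namely `learning_rate * gamma ** (epoch // step_size)`, and that the scheduler is stepped once per epoch; `step_decay` is a hypothetical helper, not a Paddle function.

```python
def step_decay(base_lr, epoch, step_size=3, gamma=0.9):
    """Learning rate in a given epoch under step decay (assumed rule, see above)."""
    return base_lr * gamma ** (epoch // step_size)

# Generator (1e-4) and discriminator (2e-4) learning rates at a few epochs
for epoch in (0, 3, 15, 29):
    print(epoch, step_decay(1e-4, epoch), step_decay(2e-4, epoch))
```

Under these assumptions the rate drops by 10% every three epochs, so over 30 epochs both learning rates end at roughly 39% of their starting values, and the discriminator keeps twice the generator's rate throughout.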

    # # Load the parameter files of the model and the discriminator
    # M_path = 'model_params/Mmodel_state3.pdparams'
    # layer_state_dictm = paddle.load(M_path)
    # generator.set_state_dict(layer_state_dictm)
    # D_path = 'discriminator_params/Dmodel_state3.pdparams'
    # layer_state_dictD = paddle.load(D_path)
    # discriminator.set_state_dict(layer_state_dictD)

    EPOCHEES = 30
    i = 0
    save_dir_model = "model_params"
    save_dir_Discriminator = "discriminator_params"

    def gram(x):
        b, c, h, w = x.shape
        x_tmp = x.reshape((b, c, (h * w)))
        gram = paddle.matmul(x_tmp, x_tmp, transpose_y=True)
        return gram / (c * h * w)

    def style_loss(fake, style):
        gram_loss = nn.L1Loss()(gram(fake), gram(style))
        return gram_loss
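The Gram-matrix style loss above can be mirrored in NumPy to show one property that matters for colorization: the Gram matrix records which feature channels fire together, not where they fire. The sketch below is an illustrative re-implementation on random toy features (an assumption, not the VGG features used in training).

```python
import numpy as np

def gram(x):
    """Channel-by-channel correlation of a (batch, channels, h, w) feature map."""
    b, c, h, w = x.shape
    flat = x.reshape(b, c, h * w)
    return flat @ flat.transpose(0, 2, 1) / (c * h * w)

def style_loss(fake, style):
    # mean absolute difference between the two Gram matrices (L1 loss)
    return np.abs(gram(fake) - gram(style)).mean()

rng = np.random.default_rng(0)
features = rng.normal(size=(1, 4, 6, 6))

# Shuffle the spatial positions: the layout changes, the Gram matrix does not.
perm = rng.permutation(36)
shuffled = features.reshape(1, 4, 36)[:, :, perm].reshape(features.shape)
print(gram(features).shape, style_loss(features, shuffled))
```

Because the loss ignores layout, it compares overall color and texture statistics. The L1 and VGG feature losses in the training loop are the terms that tie the output to the spatial structure.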

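    def scaled_dot_product(query, key, mask=None, dropout=None):
        "Compute 'Scaled Dot Product Attention'"
        d_k = query.shape[-1]
        scores = paddle.matmul(query, key.transpose([0, 2, 1])) \
            / math.sqrt(d_k)
        return scores

    triplet_margin = 12

    def similarity_based_triple_loss(anchor, positive, negative):
        distance = scaled_dot_product(anchor, positive) - scaled_dot_product(anchor, negative) + triplet_margin
        loss = paddle.mean(
            paddle.maximum(distance, paddle.zeros_like(distance)))
        return loss

    from tqdm import tqdm

Below is the training code. I have commented it out so that everyone can run the notebook in one go and jump straight to testing.

One detail of the training code: compared with the earlier version, where the discriminator input was [fake_I_gt, sketch_img], I now feed the discriminator [fake_I_gt, sketch_img, reference_img], i.e. one extra layer of color information. This is an improvement borrowed from colorgan: from a conditional GAN point of view, I need the discriminator to judge whether both the colors and the line-art structure of the generated image are plausible, so giving the discriminator the color information as a prior leads to better training. This follows up on a point I made elsewhere.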
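The change in discriminator input also explains the `7` in `Discriminator(16, 7)`: three channels for the generated image, one for the sketch, and three for the blurred reference. A tiny NumPy sketch with assumed toy sizes makes the channel count explicit.

```python
import numpy as np

batch, size = 2, 8  # toy sizes, for illustration only
fake_I_gt = np.zeros((batch, 3, size, size), dtype=np.float32)      # generated RGB image
sketch_img = np.zeros((batch, 1, size, size), dtype=np.float32)     # single-channel line art
reference_img = np.zeros((batch, 3, size, size), dtype=np.float32)  # blurred RGB reference

# Earlier version: image + sketch. This version: image + sketch + reference.
d_input_old = np.concatenate((fake_I_gt, sketch_img), axis=1)
d_input_new = np.concatenate((fake_I_gt, sketch_img, reference_img), axis=1)
print(d_input_old.shape[1], d_input_new.shape[1])  # 4 7
```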

    # # Training code; uncomment it if you want to train
    # adversarial_loss = paddle.nn.MSELoss()
    # l1_loss = nn.L1Loss()
    # step = 0
    # for epoch in range(EPOCHEES):
    #     # if(step >1000):
    #     #     break
    #     for appearance_img, sketch_img, reference_img in tqdm(data_loader):
    #         # try:
    #         #     if(step >1000):
    #         #         break
    #         # print(input_img.shape,mask.shape)
    #         appearance_img = paddle.transpose(x=appearance_img.astype("float32")/127.5-1, perm=[0,3,1,2])
    #         # color_noise = paddle.tanh(paddle.randn(shape = appearance_img.shape))
    #         # appearance_img += color_noise
    #         # appearance_img = paddle.tanh(appearance_img)
    #         sketch_img = paddle.max(paddle.transpose(x=sketch_img.astype("float32")/255, perm=[0,3,1,2]), axis=1, keepdim=True)
    #         reference_img = paddle.transpose(x=reference_img.astype("float32")/127.5-1, perm=[0,3,1,2])
    #         # ---------------------
    #         #  Train Generator
    #         # ---------------------
    #         fake_I_gt, quary, key, value = generator(sketch_img, reference_img)
    #         fake_output = discriminator(paddle.concat((fake_I_gt, sketch_img, reference_img), axis=1))
    #         g_adversarial_loss = adversarial_loss(fake_output, paddle.ones_like(fake_output))
    #         g_l1_loss = l1_loss(fake_I_gt, appearance_img)*20
    #         g_triplet_loss = similarity_based_triple_loss(quary, key, value)
    #         g_vggloss = paddle.to_tensor(0.)
    #         g_style_loss = paddle.to_tensor(0.)
    #         rates = [1.0 / 32, 1.0 / 16, 1.0 / 8, 1.0 / 4, 1.0]
    #         # _, fake_features = VGG( paddle.multiply (img_fake,loss_mask))
    #         # _, real_features = VGG(paddle.multiply (input_img,loss_mask))
    #         fake_features = VGG(fake_I_gt)
    #         real_features = VGG(appearance_img)
    #         for i in range(len(fake_features)):
    #             a, b = fake_features[i], real_features[i]
    #             # if i ==len(fake_features)-1:
    #             #     a = paddle.multiply( a,F.interpolate(loss_mask,a.shape[-2:]))
    #             #     b = paddle.multiply( b,F.interpolate(loss_mask,b.shape[-2:]))
    #             g_vggloss += rates[i] * l1_loss(a, b)
    #             g_style_loss += rates[i] * style_loss(a, b)
    #         g_vggloss /= 30
    #         g_style_loss /= 10
    #         # print(step,"g_adversarial_loss",g_adversarial_loss.numpy()[0],"g_triplet_loss",g_triplet_loss.numpy()[0],"g_vggloss",g_vggloss.numpy()[0],"g_styleloss", \
    #         #     g_style_loss.numpy()[0],"g_l1_loss",g_l1_loss.numpy()[0],"g_loss",g_loss.numpy()[0])
    #         g_loss = g_l1_loss + g_triplet_loss + g_adversarial_loss + g_style_loss + g_vggloss
    #         g_loss.backward()
    #         optimizer_G.step()
    #         optimizer_G.clear_grad()
    #         # ---------------------
    #         #  Train Discriminator
    #         # ---------------------
    #         fake_output = discriminator(paddle.concat((fake_I_gt.detach(), sketch_img, reference_img), axis=1))
    #         real_output = discriminator(paddle.concat((appearance_img, sketch_img, reference_img), axis=1))
    #         d_real_loss = adversarial_loss(real_output, paddle.ones_like(real_output))
    #         d_fake_loss = adversarial_loss(fake_output, paddle.zeros_like(fake_output))
    #         d_loss = d_real_loss + d_fake_loss
    #         d_loss.backward()
    #         optimizer_D.step()
    #         optimizer_D.clear_grad()
    #         if step%2==0:
    #             log_writer.add_scalar(tag='train/d_real_loss', step=step, value=d_real_loss.numpy()[0])
    #             log_writer.add_scalar(tag='train/d_fake_loss', step=step, value=d_fake_loss.numpy()[0])
    #             log_writer.add_scalar(tag='train/d_all_loss', step=step, value=d_loss.numpy()[0])
    #             # log_writer.add_scalar(tag='train/col_loss', step=step, value=col_loss.numpy()[0])
    #             log_writer.add_scalar(tag='train/g_adversarial_loss', step=step, value=g_adversarial_loss.numpy()[0])
    #             log_writer.add_scalar(tag='train/g_triplet_loss', step=step, value=g_triplet_loss.numpy()[0])
    #             log_writer.add_scalar(tag='train/g_vggloss', step=step, value=g_vggloss.numpy()[0])
    #             log_writer.add_scalar(tag='train/g_style_loss', step=step, value=g_style_loss.numpy()[0])
    #             log_writer.add_scalar(tag='train/g_l1_loss', step=step, value=g_l1_loss.numpy()[0])
    #             log_writer.add_scalar(tag='train/g_loss', step=step, value=g_loss.numpy()[0])
    #         step += 1
    #         # print(i)
    #         if step%100 == 3:
    #             print(step,"g_adversarial_loss",g_adversarial_loss.numpy()[0],"g_triplet_loss",g_triplet_loss.numpy()[0],"g_vggloss",g_vggloss.numpy()[0],"g_styleloss", \
    #                 g_style_loss.numpy()[0],"g_l1_loss",g_l1_loss.numpy()[0],"g_loss",g_loss.numpy()[0])
    #             print(step,"dreal_loss",d_real_loss.numpy()[0],"dfake_loss",d_fake_loss.numpy()[0],"d_all_loss",d_loss.numpy()[0])
    #             # img_fake = paddle.multiply (img_fake,loss_mask)
    #             appearance_img = (appearance_img+1)*127.5
    #             reference_img = (reference_img+1)*127.5
    #             fake_I_gt = (fake_I_gt+1)*127.5
    #             g_output = paddle.concat([appearance_img, reference_img, fake_I_gt], axis=3).detach().numpy()  # tensor -> numpy
    #             g_output = g_output.transpose(0, 2, 3, 1)[0]  # NCHW -> NHWC
    #             g_output = g_output.astype(np.uint8)
    #             cv2.imwrite(os.path.join("./result", 'epoch'+str(step).zfill(3)+'.png'), cv2.cvtColor(g_output, cv2.COLOR_RGB2BGR))
    #             # generator.train()
    #         if step%100 == 3:
    #             # save_param_path_g = os.path.join(save_dir_generator, 'Gmodel_state'+str(step)+'.pdparams')
    #             # paddle.save(model.generator.state_dict(), save_param_path_g)
    #             save_param_path_d = os.path.join(save_dir_Discriminator, 'Dmodel_state'+str(3)+'.pdparams')
    #             paddle.save(discriminator.state_dict(), save_param_path_d)
    #             # save_param_path_e = os.path.join(save_dir_encoder, 'Emodel_state'+str(1)+'.pdparams')
    #             # paddle.save(model.encoder.state_dict(), save_param_path_e)
    #             save_param_path_m = os.path.join(save_dir_model, 'Mmodel_state'+str(3)+'.pdparams')
    #             paddle.save(generator.state_dict(), save_param_path_m)
    #             # break
    #         # except:
    #         #     pass
    #         # break
    #     scheduler_G.step()
    #     scheduler_D.step()

    '''
    Test code. I explain it in more detail this time; the dataset was already described in detail in V2.
    '''
    model = Generator()
    M_path = 'Mmodel_state3.pdparams'
    layer_state_dictm = paddle.load(M_path)
    model.set_state_dict(layer_state_dictm)

    '''
    Build the color reference image
    '''
    path2 = "data/d/data/train/2539033.png"
    path2 = "test/纹理1.jpg"
    img_a = cv2.cvtColor(cv2.imread(path2, flags=cv2.IMREAD_COLOR), cv2.COLOR_BGR2RGB)
    from paddle.vision.transforms import CenterCrop, Resize
    transform = Resize((512, 512))
    # img_a = img_a[:, :512, :]  # uncomment if the color image is a GT from the training set; leave it commented for your own images
    img_a = transform(img_a)
    ## Set 30 squares of 50*50 to white; if some color leaks through, uncommenting the four lines below can help in some cases.
    # for i in range(30):
    #     randx = randint(50, 400)
    #     randy = randint(0, 450)
    #     img_a[randx:randx+50, randy:randy+50] = 255  # set the pixels to 255 (white)
    # img_a = AffineTrans(img_a)  # no affine transform is needed at test time
    img_a = cv2.blur(img_a, (100, 100))  # the key blurring step
    reference_img = paddle.transpose(x=paddle.to_tensor(img_a).unsqueeze(0).astype("float32")/127.5-1, perm=[0,3,1,2])  # style

    '''
    Build the line-art image
    '''
    path2 = "data/d/data/train/2537028.png"
    img = cv2.cvtColor(cv2.imread(path2, flags=cv2.IMREAD_COLOR), cv2.COLOR_BGR2RGB)
    img_b = img[:, 512:, :]
    img_b = transform(img_b)
    sketch_img0 = paddle.transpose(x=paddle.to_tensor(img_b).unsqueeze(0).astype("float32"), perm=[0,3,1,2])  # content
    sketch_img = paddle.max(sketch_img0/255, axis=1, keepdim=True)

    img_fake, _, _, _ = model(sketch_img, reference_img)
    print('img_fake', img_fake.shape)
    img_fake = img_fake.transpose([0, 2, 3, 1])[0].numpy()  # NCHW -> NHWC
    print(img_fake.shape)
    img_fake = (img_fake+1) * 127.5
    reference_img = (reference_img+1)*127.5
    sketch_img0 = sketch_img0.transpose([0, 2, 3, 1])[0].numpy()
    reference_img = reference_img.transpose([0, 2, 3, 1])[0].numpy()
    g_output = np.concatenate((sketch_img0, reference_img, img_fake), axis=1)
    g_output = g_output.astype(np.uint8)
    cv2.imwrite(os.path.join("./test", " 10000.png"), cv2.cvtColor(g_output, cv2.COLOR_RGB2BGR))

img_fake [1, 3, 512, 512]
(512, 512, 3)

True
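The test code moves images between the 0..255 pixel range and the -1..1 range the generator works in (its last layer is a `Tanh`). The NumPy sketch below isolates that round trip. The helper names are hypothetical, and unlike the test code, which casts with `astype(np.uint8)` directly, this version rounds before casting so that the round trip is exact.

```python
import numpy as np

def to_model_range(img_uint8):
    """Map 0..255 pixel values to -1..1, as done for the color and reference images."""
    return img_uint8.astype(np.float32) / 127.5 - 1.0

def to_uint8(x):
    """Map -1..1 back to 0..255, rounding to the nearest integer before the cast."""
    return np.clip(np.rint((x + 1.0) * 127.5), 0, 255).astype(np.uint8)

def sketch_to_single_channel(sketch_uint8):
    """Scale an HWC sketch to 0..1 and keep the per-pixel maximum over channels."""
    return (sketch_uint8.astype(np.float32) / 255.0).max(axis=-1, keepdims=True)

pixels = np.arange(256, dtype=np.uint8).reshape(16, 16, 1).repeat(3, axis=-1)
normalized = to_model_range(pixels)
restored = to_uint8(normalized)
sketch = sketch_to_single_channel(pixels)
print(normalized.min(), normalized.max(), sketch.shape)
```

The sketch helper takes the maximum over the last axis because this toy image is in HWC layout; the test code does the same over axis 1 after transposing to NCHW.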
